Why this matters: With AI voice cloning and video deepfakes rising, you are the last line of defense against devastating financial and reputational fraud.

🚨 Attention Activity: The Urgent Call

Imagine this scenario: You receive an urgent, unrecorded video call from your CEO. The voice sounds exactly right. The face looks real, but there are slight blurs around the mouth. They demand an immediate, confidential wire transfer to a new vendor.

What is your immediate response?

🌐 The Threat Landscape

Digital impersonation isn't just basic phishing anymore. The threat landscape now includes advanced synthetic media designed to induce unauthorized financial transfers, credential disclosure, or reputational harm.

Key threats include:

- Executive Deepfake Video: Algorithmically fabricated video streams that mirror an executive's physical appearance and mannerisms, designed to bypass visual verification.

- AI Voice Replication: A cloned executive voice used on phone calls to urgently "authorize" wire transfers or data extraction.

- Advanced BEC (Business Email Compromise): Sophisticated spoofing of internal communication channels that blends seamlessly with known organizational context.

💡 Knowledge Check

Which of the following scenarios represents a high-risk synthetic media threat under this SOP?

🛡️ The Golden Rule: Identity Verification

No financial transfer, payroll modification, or confidential disclosure can be executed solely on the basis of email, video, or voice instruction.

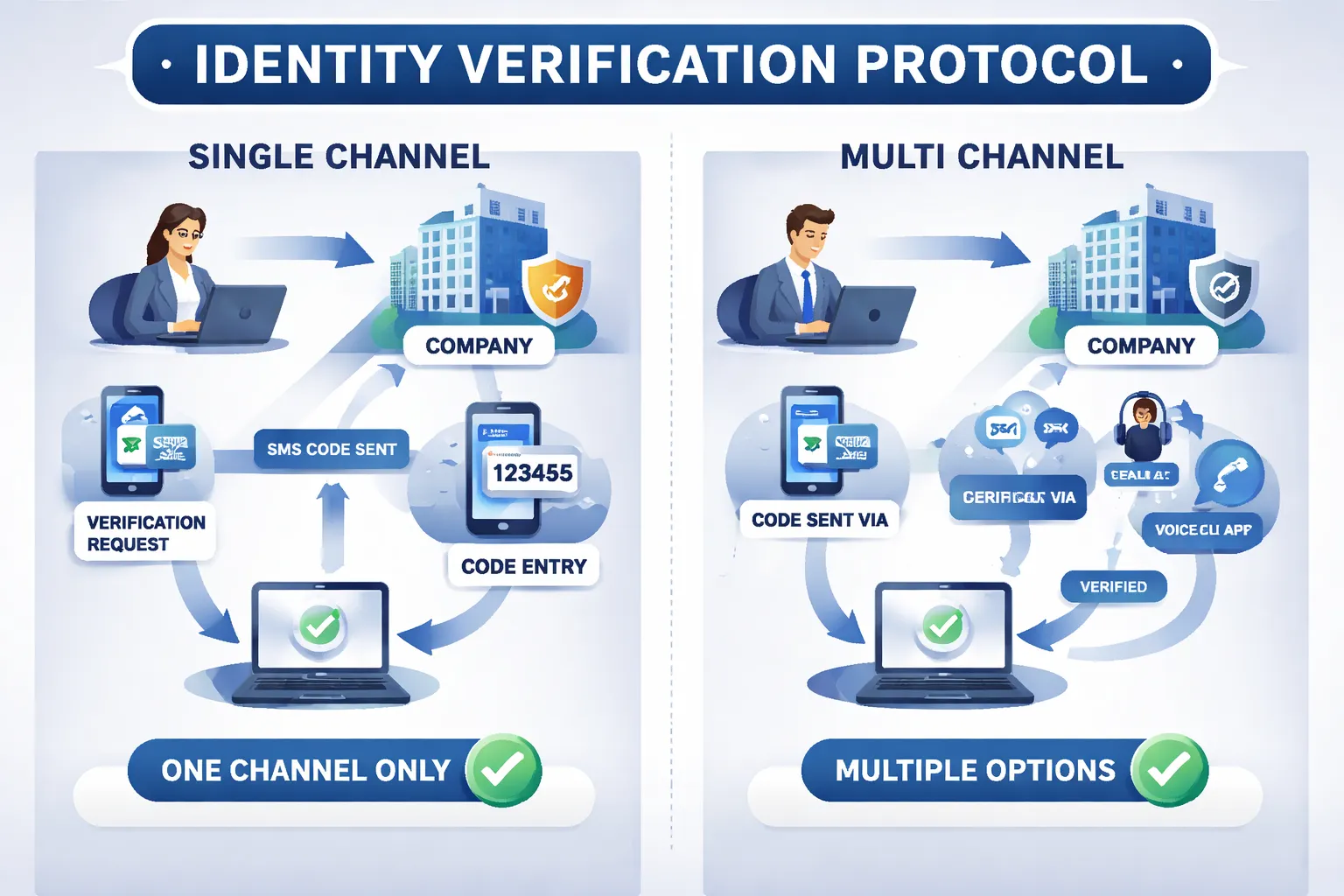

All high-risk requests require multi-channel verification. You must perform a documented callback using a pre-validated, trusted contact channel. You must also obtain independent managerial confirmation. Never rely solely on the channel where the request originated.

Failure to adhere to this callback verification is a material policy violation and jeopardizes organizational security. Even if the request appears to come directly from the CEO, verification is a non-negotiable requirement.
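
The rule above can be read as a simple gate: a high-risk request proceeds only when a documented callback on a different, pre-validated channel and an independent managerial confirmation are both on record. The Python sketch below is illustrative only. The `Request` fields, the `HIGH_RISK` set, and the `may_execute` function are hypothetical names, not part of any organizational system, and treating other request types as out of scope is an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Optional

# Request types the rule names as high-risk (labels are hypothetical).
HIGH_RISK = {"financial_transfer", "payroll_modification", "confidential_disclosure"}


@dataclass
class Request:
    kind: str                                # e.g. "financial_transfer"
    origin_channel: str                      # where the request arrived, e.g. "video-call"
    callback_channel: Optional[str] = None   # pre-validated channel used for the callback
    callback_documented: bool = False        # was the callback written down?
    manager_confirmed: bool = False          # independent managerial confirmation


def may_execute(req: Request) -> bool:
    """Return True only if the request satisfies the verification rule."""
    if req.kind not in HIGH_RISK:
        return True  # assumption: the gate only covers high-risk requests
    callback_ok = (
        req.callback_channel is not None
        and req.callback_channel != req.origin_channel  # never the originating channel
        and req.callback_documented
    )
    return callback_ok and req.manager_confirmed


# The scenario from the opening activity: an urgent video call, nothing verified yet.
urgent_call = Request(kind="financial_transfer", origin_channel="video-call")
print(may_execute(urgent_call))  # False
```

Note that the sender's apparent seniority never appears as an input: a request that seems to come from the CEO passes through the same gate as any other.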

🔐 Layered Defense & Protection

We rely on both human vigilance and technical controls. The organization deploys layered anomaly-based email filtering, DMARC/DKIM protocols, and multimedia forensic analysis.
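
For readers unfamiliar with DMARC: a domain publishes its policy as a DNS TXT record made of `tag=value` pairs, and the `p` tag tells receiving mail servers how to treat messages that fail authentication (`none`, `quarantine`, or `reject`). The Python sketch below parses an example record. The record and the `example.com` address are illustrative and do not describe the organization's actual configuration; `parse_dmarc` is a hypothetical helper name.

```python
def parse_dmarc(record):
    """Split a DMARC TXT record into a dict of tag -> value."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # skip empty segments, e.g. after a trailing ';'
        key, _, value = part.partition("=")  # split on the first '=' only
        tags[key.strip()] = value.strip()
    return tags


# Illustrative record, not a real deployment.
example = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"
policy = parse_dmarc(example)
print(policy["p"])  # reject
```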

- Internal Safeguards (Human & Tech Fusion): Executives and finance-authorized personnel receive enhanced protection, including continuous monitoring for identity impersonation and advanced training on recognizing synthetic media.

- Third-Party Accountability (Vendor Enforcement): Vendors with access to enterprise communications or brand assets are contractually bound to implement these anti-impersonation safeguards.

📢 Incident Reporting & Governance

Time is critical during an attack. All suspected impersonation, deepfake, or social engineering attempts must be reported immediately to the Information Security Team.

The following protocols must be adhered to during an incident:

Employees are strictly prohibited from responding to external impersonation attempts without direct coordination from Corporate Communications and Legal. Silence is the safest first response.

Violation of these verification and reporting procedures jeopardizes the entire organization and may result in disciplinary action up to and including termination.

📝 Key Takeaways

- Never Trust a Single Channel: Deepfakes and AI voice cloning can mimic executives convincingly.

- Mandatory Callbacks: High-risk financial/data requests require documented multi-channel verification via trusted contacts.

- Report Immediately: Suspected attacks must go directly to Information Security.

- Stay Silent Publicly: Do not engage with impersonators or speak to media without coordination from Corporate Communications and Legal.

Knowledge Assessment

You are about to begin the final assessment to certify your understanding of the Digital Impersonation & Synthetic Media SOP.

You must score 80% or higher to earn your certificate. You will be tested on threat identification, verification rules, and incident reporting.
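
Because the assessment has three questions, the 80% threshold leaves no room for a wrong answer: two out of three is only about 67%. A small Python sketch of that arithmetic, with `min_correct` as an illustrative helper name:

```python
def min_correct(total_questions, pass_percent=80):
    # Smallest whole number of correct answers that meets the threshold
    # (integer ceiling of pass_percent * total_questions / 100).
    return (pass_percent * total_questions + 99) // 100


print(min_correct(3))  # 3
```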

Final Certification

Assessment (Question 1 of 3)

You receive an urgent email seemingly from the CFO requesting an immediate change to a vendor's banking details. What is the REQUIRED next step?

Assessment (Question 2 of 3)

If you suspect a video call or audio instruction is actually an AI-generated deepfake attempt, who must you report this to immediately?

Assessment (Question 3 of 3)

If an external impersonation attempt goes public on social media, what is the required employee response?